Leela Chess Zero


Leela Chess Zero, (LCZero, lc0)

an adaptation of Gian-Carlo Pascutto's Leela Zero Go project [2] to Chess, initiated and announced by Stockfish co-author Gary Linscott, who was already responsible for the Stockfish Testing Framework called Fishtest. Leela Chess is open source, released under the terms of GPL version 3 or later, and supports UCI.

The goal is to build a strong chess playing entity following the same type of deep learning along with Monte-Carlo tree search (MCTS) techniques of AlphaZero as described in DeepMind's 2017 and 2018 papers [3] [4] [5], but using distributed training for the weights of the deep convolutional neural network (CNN, DNN, DCNN).


Lc0

Leela Chess Zero consists of an executable to play or analyze games, initially dubbed LCZero, soon rewritten by a team around Alexander Lyashuk for better performance and then called Lc0 [6] [7]. This executable, the actual chess engine, performs the MCTS and reads the self-taught CNN, whose weights are stored in a separate file. Lc0 is written in C++ (started with C++14, then upgraded to C++17) and may be compiled for various platforms and backends. Since deep CNN approaches are best suited to run massively in parallel on GPUs to perform all the floating point dot products for thousands of neurons, the preferred target platforms are Nvidia GPUs supporting the CUDA and cuDNN libraries [8]. Ankan Banerjee wrote the cuDNN backend (also shared by Deus X and Allie [9]) and the DX12 backend code. Different Lc0 backends exist for different hardware, and not all neural network architectures and features are supported on all backends. Nodes per second and Elo vary with the backend, the network architecture, and the net size. CPUs can be utilized, for example, via BLAS and DNNL, and GPUs via the CUDA, cuDNN, OpenCL, DX12, Metal, ONNX, and oneDNN backends.

Description

Like AlphaZero, Lc0 evaluates positions using non-linear function approximation based on a deep neural network, rather than the linear function approximation used in classical chess programs. This neural network takes the board position as input and outputs a position evaluation (QValue) and a vector of move probabilities (PValue, policy). Once trained, this network is combined with a Monte-Carlo Tree Search (MCTS), using the policy to narrow down the search to high-probability moves, and using the value to evaluate positions in the tree in place of the random rollouts of classical MCTS. The MCTS selection is done by a variation of Rosin's UCT improvement dubbed PUCT (Predictor + UCT).
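The PUCT selection step can be illustrated with a minimal sketch. It follows the AlphaZero-style formula, in which each child is scored by its mean value Q plus an exploration term U proportional to its policy prior. The `Node` class, the `select_child` helper, the value `c_puct = 1.5`, and the default of 0.0 for unvisited nodes are assumptions made for illustration, not Lc0's actual code or parameters.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Node:
    prior: float             # P: move probability from the policy output
    visits: int = 0          # N: number of visits to this node
    value_sum: float = 0.0   # W: sum of values backed up through this node
    children: dict = field(default_factory=dict)  # move string -> Node

    def q(self) -> float:
        # Mean value Q = W / N; unvisited nodes default to 0.0 (assumption)
        return self.value_sum / self.visits if self.visits else 0.0


def select_child(parent: Node, c_puct: float = 1.5):
    """Return the (move, child) pair that maximizes Q + U."""
    sqrt_parent = math.sqrt(max(parent.visits, 1))

    def score(item):
        _, child = item
        # U grows with the prior and shrinks as the child gets visited
        u = c_puct * child.prior * sqrt_parent / (1 + child.visits)
        return child.q() + u

    return max(parent.children.items(), key=score)


# Hypothetical root with three candidate moves
root = Node(prior=1.0, visits=10)
root.children = {
    "e2e4": Node(prior=0.6, visits=6, value_sum=1.2),
    "d2d4": Node(prior=0.3, visits=4, value_sum=1.6),
    "a2a3": Node(prior=0.1),
}
best_move, best_child = select_child(root)
```

In this toy tree the move with the highest prior is not selected, because the second move has a better mean value and fewer visits, which is the trade-off PUCT is meant to balance.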

Board Representation

Lc0's color agnostic board is represented by five bitboards (own pieces, opponent pieces, orthogonal sliding pieces, diagonal sliding pieces, and pawns including en passant target information coded as pawns on rank 1 and 8), two king squares, castling rights, and a flag indicating whether the board is color flipped. Getting individual piece bitboards requires some setwise operations such as intersection and set-theoretic difference [10].
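The setwise operations mentioned above can be sketched as follows. This is an illustration of the idea only: the function name, the dictionary keys, and the square numbering (a1 = bit 0, h8 = bit 63) are assumptions, not the identifiers or layout used in Lc0's board.h.

```python
RANK_1 = 0x00000000000000FF
RANK_8 = 0xFF00000000000000


def split_pieces(ours, theirs, orthogonal, diagonal, pawns,
                 our_king_sq, their_king_sq):
    """Derive per-piece-type bitboards (both colors) from the compact form."""
    occupied = ours | theirs
    kings = (1 << our_king_sq) | (1 << their_king_sq)
    queens = orthogonal & diagonal            # intersection: slides both ways
    rooks = orthogonal & ~diagonal            # set-theoretic difference
    bishops = diagonal & ~orthogonal          # set-theoretic difference
    real_pawns = pawns & ~(RANK_1 | RANK_8)   # drop en passant markers
    knights = occupied & ~(orthogonal | diagonal | real_pawns | kings)
    return {
        "pawns": real_pawns, "knights": knights, "bishops": bishops,
        "rooks": rooks, "queens": queens, "kings": kings,
    }


# Starting position, with "own" pieces on ranks 1 and 2
start = split_pieces(
    ours=0x000000000000FFFF,
    theirs=0xFFFF000000000000,
    orthogonal=0x89 | (0x89 << 56),   # a, d and h files: rooks and queens
    diagonal=0x2C | (0x2C << 56),     # c, d and f files: bishops and queens
    pawns=0x00FF00000000FF00,
    our_king_sq=4,                    # e1
    their_king_sq=60,                 # e8
)
```

Intersecting any of the returned sets with `ours` or `theirs` gives the pieces of one side.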

Network

While AlphaGo used two disjoint networks for policy and value, AlphaZero as well as Leela Chess Zero share a common "body" connected to disjoint policy and value "heads". The "body" consists of spatial 8x8 planes, using B residual blocks with F filters of kernel size 3x3, stride 1. So far, model sizes FxB of 64x6, 128x10, 192x15, and 256x20 were used.
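To give a rough sense of how these model sizes scale, the following sketch counts the 3x3 convolution weights in the residual tower. It assumes two convolutions per residual block, as in the AlphaZero paper, and ignores biases, normalization parameters, the input convolution, and the heads, so the numbers are a lower bound on the tower size rather than exact network sizes. The function name is hypothetical.

```python
def tower_conv_weights(filters: int, blocks: int, kernel: int = 3) -> int:
    """Count convolution weights in a tower of residual blocks.

    Assumes each block holds two kernel x kernel convolutions that map
    `filters` input channels to `filters` output channels.
    """
    per_conv = kernel * kernel * filters * filters
    return blocks * 2 * per_conv


# The FxB model sizes named in the text
sizes = [(64, 6), (128, 10), (192, 15), (256, 20)]
counts = {f"{f}x{b}": tower_conv_weights(f, b) for f, b in sizes}

for name, count in counts.items():
    print(f"{name}: {count:,} convolution weights")
```

Because the count grows with the square of F and linearly with B, the 256x20 tower holds roughly 53 times as many convolution weights as the 64x6 tower under these assumptions.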

Concerning nodes per second of the MCTS, smaller models are faster to calculate than larger models. They are also faster to train and show progress earlier, but they saturate earlier as well, so that at some point more training no longer improves the engine. Larger and deeper network models improve the receptivity, that is, the amount of knowledge and patterns that can be extracted from the training samples, with potential for a stronger engine. As a further improvement, Leela Chess Zero applies the Squeeze and Excite (SE) extension to the residual block architecture [11] [12]. The body is connected to both the policy "head" for the move probability distribution and the value "head" for the evaluation score, aka winning probability. The input planes encode the current position and up to seven predecessor positions.

Training

As in AlphaZero, the Zero suffix implies no initial knowledge other than the rules of the game: a superhuman player is built by starting with truly random self-play games and applying reinforcement learning based on the outcome of those games. However, there are derived approaches, such as Albert Silver's Deus X, that try to take a short-cut by initially using supervised learning techniques, such as feeding in high quality games played by other strong chess playing entities, or huge records of positions with a given preferred move. Training of the NN aims to minimize the mean squared error loss of the value output together with the policy loss, plus an L2 regularization term on the weights. Further, there are experiments to train the value head not against the game outcome, but against the accumulated value for a position after exploring some number of nodes with UCT [13].
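As a worked illustration of the training objective, the sketch below computes the loss for a single training sample in the form given in the AlphaZero paper: squared error of the value output, cross-entropy between the search probabilities and the policy output, and an L2 penalty on the weights. The function name, the argument names, and the regularization constant `c = 1e-4` are assumptions for illustration; the actual Lc0 training pipeline may weight or extend these terms differently.

```python
import math


def alphazero_style_loss(z, v, pi, p, weights, c=1e-4):
    """Loss for one sample: (z - v)^2 - sum(pi * log p) + c * ||weights||^2.

    z       game outcome from the side to move's view (-1, 0 or 1)
    v       value output of the network
    pi      target move probabilities from the search
    p       policy output of the network (probabilities, same order as pi)
    weights flat list of network weights (hypothetical stand-in)
    """
    value_loss = (z - v) ** 2
    # Cross-entropy; terms with a zero target probability contribute nothing
    policy_loss = -sum(t * math.log(q) for t, q in zip(pi, p) if t > 0)
    l2_penalty = c * sum(w * w for w in weights)
    return value_loss + policy_loss + l2_penalty


# A won game where the network was unsure about both value and move
example = alphazero_style_loss(
    z=1.0, v=0.5, pi=[1.0, 0.0], p=[0.5, 0.5], weights=[],
)
```

Here the value term contributes 0.25 and the policy term contributes ln 2, so both heads are penalized for their uncertainty.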

The distributed training is realized with a sophisticated client-server model. The client, written entirely in the Go programming language, incorporates Lc0 to produce self-play games. Controlled by the server, the client downloads the latest network, starts self-play, and uploads the games to the server, which in turn regularly produces and distributes new neural network weights once a certain number of games is available from contributors. The training software consists of Python code; the pipeline requires NumPy and TensorFlow running on Linux [14]. The server is written in Go along with Python and shell scripts.

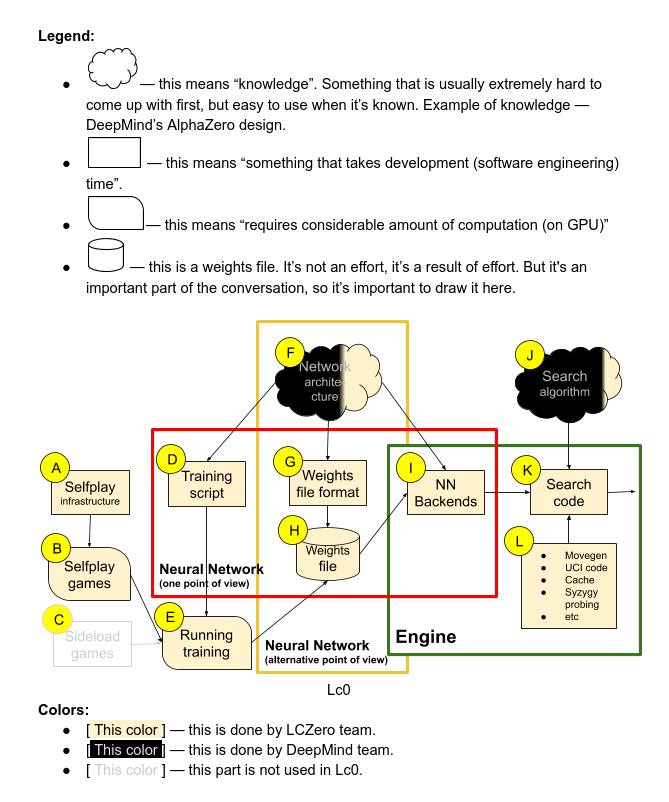

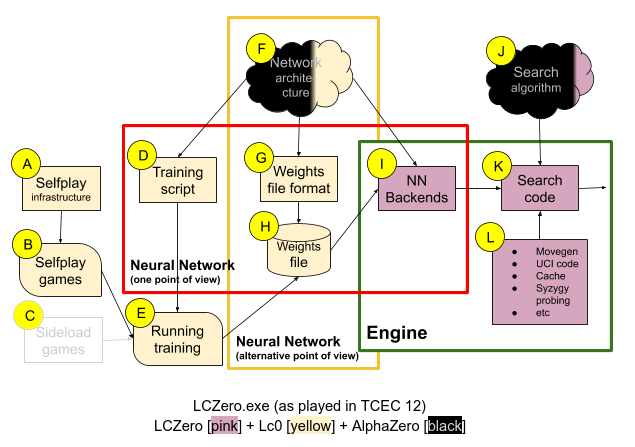

Structure Diagrams

Related to TCEC clone discussions concerning Deus X and Allie aka AllieStein,

Alexander Lyashuk published diagrams with all components of the affected engines,

The above shows the common legend, and the structure of all Leela Chess Zero's components based on current Lc0 engine [15]

Same diagram, but initial LCZero engine, which played TCEC Season 12 [16]

Tournament Play

First Experience

LCZero gained first tournament experience in April 2018 at TCEC Season 12 and over the board at the WCCC 2018 in Stockholm, July 2018. It won the fourth division of TCEC Season 13 in August 2018, Lc0 finally coming in third in the third division.

Breakthrough

TCEC Season 14, from November 2018 until February 2019, became a breakthrough: Lc0 won the third, second and first division and even qualified for the superfinal, losing by the narrow margin of +10 =81 -9, 50½-49½ in favor of Stockfish. Again runner-up in the TCEC Season 15 premier division in April 2019, Lc0 aka LCZero v0.21.1-nT40.T8.610 won the superfinal in May 2019 versus Stockfish with +14 =79 -7, 53½-46½ [17]. In the TCEC Season 16 premier division in September 2019, Lc0 came in third behind Stockfish and the supervised trained AllieStein. Lc0 took revenge by winning the TCEC Season 17 premier division in spring 2020 and, as LCZero v0.24-sv-t60-3010, beat Stockfish in a thrilling superfinal in April 2020 with +17 =71 -12, 52½-47½ [18]. The tables turned again in TCEC Season 18, when Stockfish won the superfinal.

Release Dates

2018

- Leela Chess Zero / Lc0 v0.14.1 - July 08, 2018

- Leela Chess Zero / Lc0 v0.16.0 - July 20, 2018

- Leela Chess Zero / Lc0 v0.17.0 - August 27, 2018

- Leela Chess Zero / Lc0 v0.18.0 - September 30, 2018

- Leela Chess Zero / Lc0 v0.18.1 - October 02, 2018

- Leela Chess Zero / Lc0 v0.19.0 - November 19, 2018

- Leela Chess Zero / Lc0 v0.19.1.1 - December 10, 2018

2019

- Leela Chess Zero / Lc0 v0.20.0 - January 01, 2019

- Leela Chess Zero / Lc0 v0.20.1 - January 07, 2019

- Leela Chess Zero / Lc0 v0.20.2 - February 01, 2019

- Leela Chess Zero / Lc0 v0.21.1 - March 23, 2019

- Leela Chess Zero / Lc0 v0.21.2 - June 09, 2019

- Leela Chess Zero / Lc0 v0.21.4 - July 28, 2019

- Leela Chess Zero / Lc0 v0.22.0 - August 05, 2019

- Leela Chess Zero / Lc0 v0.23.2 - December 31, 2019

2020

- Leela Chess Zero / Lc0 v0.23.3 - February 18, 2020

- Leela Chess Zero / Lc0 v0.24.1 - March 15, 2020

- Leela Chess Zero / Lc0 v0.25.1 - April 30, 2020

- Leela Chess Zero / Lc0 v0.26.0 - July 02, 2020

- Leela Chess Zero / Lc0 v0.26.1 - July 15, 2020

- Leela Chess Zero / Lc0 v0.26.2 - September 02, 2020

- Leela Chess Zero / Lc0 v0.26.3 - October 10, 2020

2021

- Leela Chess Zero / Lc0 v0.27.0 - February 21, 2021

- Leela Chess Zero / Lc0 v0.28.0 - August 26, 2021

- Leela Chess Zero / Lc0 v0.28.2 - December 13, 2021

2022

- Leela Chess Zero / Lc0 v0.29.0 - December 13, 2022

2023

- Leela Chess Zero / Lc0 v0.30.0 - July 21, 2023

Authors

See also

- Allie

- AlphaZero

- Ceres

- Fat Fritz

- Deep Learning

- Deus X

- Leela Zero

- Leila

- Maia Chess

- Monte-Carlo Tree Search

Publications

- Bill Jordan (2020). Calculation versus Intuition: Stockfish versus Leela. amazon » TCEC, Stockfish

- Dominik Klein (2021). Neural Networks For Chess. Release Version 1.1 · GitHub [19]

- Rejwana Haque, Ting Han Wei, Martin Müller (2021). On the Road to Perfection? Evaluating Leela Chess Zero Against Endgame Tablebases. Advances in Computer Games 17

Forum Posts

2018

- Announcing lczero by Gary, CCC, January 09, 2018

- Re: Announcing lczero by Daniel Shawul, CCC, January 21, 2018 » Rollout Paradigm

- Policy and value heads are from AlphaGo Zero, not Alpha Zero Issue #47 by Gian-Carlo Pascutto, glinscott/leela-chess · GitHub, January 24, 2018

- LCZero is learning by Gary, CCC, January 30, 2018

- LCZero update by Gary, CCC, March 14, 2018

- LCZero update (2) by Rein Halbersma, CCC, March 25, 2018

- LCZero: Progress and Scaling. Relation to CCRL Elo by Kai Laskos, CCC, March 28, 2018 » Playing Strength

- What does LCzero learn? by Uri Blass, CCC, April 05, 2018

- How to play vs LCZero with Cute Chess gui by Hai, CCC, April 08, 2018 » Cute Chess

- LCZero in Aquarium / Fritz by Carl Bicknell, CCC, April 11, 2018

- LCZero on 10x128 now by Gary, CCC, April 12, 2018

- lczero faq by Duncan Roberts, CCC, April 13, 2018

- Run LC Zero in LittleBlitzerGUI by Stefan Pohl, CCC, April 14, 2018 » LittleBlitzer

- LC0 - how to catch up? by Srdja Matovic, CCC, April 16, 2018

- Leela on more then one GPU? by Karlo Balla, CCC, May 01, 2018

- GPU contention by Ian Kennedy, CCC, May 07, 2018 » GPU

- New CLOP settings give Leela huge tactics boost by Albert Silver, CCC, June 04, 2018 » CLOP

- First Win by Leela Chess Zero against Stockfish dev by Ankan Banerjee, CCC, June 07, 2018 » Stockfish

- what may be two firsts... by Michael B, CCC, June 13, 2018 » DGT Pi

- LcZero and STS by Ed Schröder, CCC, June 14, 2018 » Strategic Test Suite

- Who entered Leela into WCCC? Bad idea!! by Chris Whittington, LCZero Forum, June 23, 2018 » WCCC 2018

- how will Leela fare at the WCCC? by dannyb, CCC, July 10, 2018 » WCCC 2018

- Lc0 will participate at the WCCC? Wow! by Martin Renneke, LCZero Forum, July 10, 2018

- How Leela uses history planes by Tristrom Cooke, LCZero Forum, July 19, 2018

- Why Lc0 eval (in cp) is asymmetric against AB engines? by Kai Laskos, CCC, July 25, 2018 » Asymmetric Evaluation, Pawn Advantage, Win Percentage, and Elo

- TCEC season 13, 2 NN engines will be participating, Leela and Deus X by Nay Lin Tun, CCC, July 28, 2018

- Re: TCEC season 13, 2 NN engines will be participating, Leela and Deus X by Gian-Carlo Pascutto, CCC, August 03, 2018

- Has Silver written any code for "his" ZeusX? by Chris Whittington, LCZero Forum, July 31, 2018

- Re: Has Silver written any code for "his" ZeusX? by Alexander Lyashuk, LCZero Forum, August 02, 2018

- Some properties of Lc0 playing by Kai Laskos, CCC, September 14, 2018

- How good is the RTX 2080 Ti for Leela? by Hai, CCC, September 15, 2018 [20]

- Re: How good is the RTX 2080 Ti for Leela? by Ankan Banerjee, CCC, September 16, 2018

- Re: How good is the RTX 2080 Ti for Leela? by Ankan Banerjee, CCC, September 17, 2018 [21]

- Re: How good is the RTX 2080 Ti for Leela? by Ankan Banerjee, CCC, October 28, 2018

- Re: How good is the RTX 2080 Ti for Leela? by Ankan Banerjee, CCC, November 15, 2018

- LC0 0.18rc1 released by Günther Simon, CCC, September 25, 2018

- My non-OC RTX 2070 is very fast with Lc0 by Kai Laskos, CCC, November 19, 2018 [22]

- 2900 Elo points progress, 10 million games, 330 nets by Kai Laskos, CCC, November 25, 2018

- Re: 2900 Elo points progress, 10 million games, 330 nets by crem, CCC, November 25, 2018

- Scaling of Lc0 at high Leela Ratio by Kai Laskos, CCC, November 27, 2018

- Re: Alphazero news by Gian-Carlo Pascutto, CCC, December 07, 2018 » AlphaZero

- Policy training in Alpha Zero, LC0 .. by Chris Whittington, CCC, December 18, 2018 » AlphaZero

- LC0 using 4 x 2080 Ti GPU's on Chess.com tourney? by M. Ansari, CCC, December 28, 2018

- Smallnet (128x10) run1 progresses remarkably well by Kai Laskos, CCC, December 19, 2018

- use multiple neural nets? by Warren D. Smith, LCZero Forum, December 25, 2018

2019

- LCZero FAQ is missing one important fact by Jouni Uski, CCC, January 01, 2019 » GPU

- "boosting" endgames in leela training? Simple change to learning process proposed: "forked" training games by Warren D. Smith, LCZero Forum, January 03, 2019

- Leela on a weak pc, question by chessico, CCC, January 09, 2019

- Michael Larabel benches lc0 on various GPUs by Warren D. Smith, LCZero Forum, January 14, 2019 » GPU

- Can somebody explain what makes Leela as strong as Komodo? by Chessqueen, CCC, January 16, 2019

- A0 policy head ambiguity by Daniel Shawul, CCC, January 21, 2019 » AlphaZero

- Schizophrenic rating model for Leela by Kai Laskos, CCC, January 21, 2019 » Match Statistics

- Leela Zero (Lc0) - NVIDIA Geforce RTX 2060 by Andreas Strangmüller, CSS Forum, January 29, 2019

- 11258-32x4-se distilled network released by Dietrich Kappe, CCC, February 03, 2019

- Lc0 setup Hilfe by Clemens Keck, CSS Forum, February 07, 2019 (German)

- Lc0 - macOS binary requested by Steppenwolf, CCC, February 09, 2019 » Mac OS

- Thanks for LC0 by Peter Berger, CCC, February 19, 2019

- Re: Training the trainer: how is it done for Stockfish? by Graham Jones, CCC, March 03, 2019 » Monte-Carlo Tree Search, Stockfish

- Lc0 51010 by Larry Kaufman, CCC, March 29, 2019

- Using LC0 with one or two GPUs - a guide by Srdja Matovic, CCC, March 30, 2019 » GPU

- 32930 Boost network available by Dietrich Kappe, CCC, April 09, 2019

- Lc0 question by Larry Kaufman, CCC, July 06, 2019

- Some newbie questions about lc0 by Nguyen Pham, CCC, August 25, 2019

- Lc0 Evaluation Explanation by Hamster, CCC, August 29, 2019

- Re: Lc0 Evaluation Explanation by Alexander Lyashuk, CCC, September 03, 2019

- My failed attempt to change TCEC NN clone rules by Alexander Lyashuk, CCC, September 14, 2019 » TCEC

- Best Nets for Lc0 Page by Ted Summers, CCC, December 23, 2019

- Correct LC0 syntax for multiple GPUs by Dann Corbit, CCC, December 30, 2019

2020

- Lc0 soon to support chess960 ? by Modern Times, CCC, April 18, 2020 » Chess960

- How to run rtx 2080ti for leela optimally? by h1a8, CCC, April 20, 2020

- Total noob Leela question by Harm Geert Muller, CCC, June 03, 2020

- How strong is Stockfish NNUE compared to Leela.. by OmenhoteppIV, LCZero Forum, July 13, 2020 » Stockfish NNUE

- LC0 vs. NNUE - some tech details... by Srdja Matovic, CCC, July 29, 2020 » NNUE

- The next step for LC0? by Srdja Matovic, CCC, August 28, 2020

- Checking the backends with the new lc0 binary by Kai Laskos, CCC, October 01, 2020

- ZZ-tune conclusively better than the Kiudee default for Lc0 by Kai Laskos, CCC, December 01, 2020 [23]

2021

- Announcing Ceres by crem, LCZero blog, January 01, 2021 » Ceres

- leela by Stuart Cracraft, CCC, March 29, 2021 » Banksia GUI, iPhone

- Joking FTW, Seriously by borg, LCZero blog, April 25, 2021

- The importance of open data by borg, LCZero blog, June 15, 2021

- Leela data publicly available for use by Madeleine Birchfield, CCC, June 15, 2021

- will Tcec allow Stockfish with a Leela net to play? by Wilson, CCC, June 17, 2021 » TCEC Season 21

External Links

Chess Engine

- Leela Chess Zero

- Blog - Leela Chess Zero

- LCZero – Forum

- Testing instance of LCZero server

- Leela Chess Zero from Wikipedia

- Leela (software) from Wikipedia

- Leela Chess Zero - Facebook

- Leela Chess Zero (@LeelaChessZero) | Twitter

- Lc0 Slides by Alexander Lyashuk

GitHub

- LCZero · GitHub

- GitHub - LeelaChessZero/lczero: A chess adaption of GCP's Leela Zero

- GitHub - LeelaChessZero/lc0: The rewritten engine, originally for tensorflow. Now all other backends have been ported here

- Home · LeelaChessZero/lc0 Wiki · GitHub

- Getting Started · LeelaChessZero/lc0 Wiki · GitHub

- FAQ · LeelaChessZero/lc0 Wiki · GitHub

- Technical Explanation of Leela Chess Zero · LeelaChessZero/lc0 Wiki · GitHub

- Contributors to LeelaChessZero/lc0 · GitHub

- Use NHCW layout for fused winograd residual block (#1567) · LeelaChessZero/lc0@62741d5 · GitHub, commit by Ankan Banerjee, June 10, 2021

- GitHub - mooskagh/lc0: The rewritten engine, originally for cudnn. Now all other backends have been ported here

- Distilled Networks · dkappe/leela-chess-weights Wiki · GitHub

Rating Lists

ChessBase

- Leela Chess Zero: AlphaZero for the PC by Albert Silver, ChessBase News, April 26, 2018

- Standing on the shoulders of giants by Albert Silver, ChessBase News, September 18, 2019

- Running Leela and Fat Fritz on your notebook by Evelyn Zhu, ChessBase News, June 14, 2020 » Fat Fritz

Chessdom

- Interview with Alexander Lyashuk about the recent success of Lc0, Chessdom, February 6, 2019 » TCEC Season 14

Tuning

- GitHub - kiudee/bayes-skopt: A fully Bayesian implementation of sequential model-based optimization by Karlson Pfannschmidt » Fat Fritz [24]

- GitHub - kiudee/chess-tuning-tools by Karlson Pfannschmidt [25]

Misc

- Leela from Wikipedia

- Leela (game) from Wikipedia

- Leela (name) from Wikipedia

- Leela (Doctor Who) from Wikipedia

- Leela (Futurama) from Wikipedia

- Soft Machine - Hidden Details, 2018, YouTube Video

- Lineup: Theo Travis, Roy Babbington, John Etheridge, John Marshall

References

- [1] Leela Chess Zero (@LeelaChessZero) | Twitter

- [2] GitHub - gcp/leela-zero: Go engine with no human-provided knowledge, modeled after the AlphaGo Zero paper

- [3] David Silver, Thomas Hubert, Julian Schrittwieser, Ioannis Antonoglou, Matthew Lai, Arthur Guez, Marc Lanctot, Laurent Sifre, Dharshan Kumaran, Thore Graepel, Timothy Lillicrap, Karen Simonyan, Demis Hassabis (2017). Mastering Chess and Shogi by Self-Play with a General Reinforcement Learning Algorithm. arXiv:1712.01815

- [4] David Silver, Thomas Hubert, Julian Schrittwieser, Ioannis Antonoglou, Matthew Lai, Arthur Guez, Marc Lanctot, Laurent Sifre, Dharshan Kumaran, Thore Graepel, Timothy Lillicrap, Karen Simonyan, Demis Hassabis (2018). A general reinforcement learning algorithm that masters chess, shogi, and Go through self-play. Science, Vol. 362, No. 6419

- [5] AlphaZero paper, and Lc0 v0.19.1 by crem, LCZero blog, December 07, 2018

- [6] lc0 transition · LeelaChessZero/lc0 Wiki · GitHub

- [7] Re: TCEC season 13, 2 NN engines will be participating, Leela and Deus X by Gian-Carlo Pascutto, CCC, August 03, 2018

- [8] NVIDIA cuDNN | NVIDIA Developer

- [9] Re: My failed attempt to change TCEC NN clone rules by Adam Treat, CCC, September 19, 2019

- [10] lc0/board.h at master · LeelaChessZero/lc0 · GitHub

- [11] Technical Explanation of Leela Chess Zero · LeelaChessZero/lc0 Wiki · GitHub

- [12] Squeeze-and-Excitation Networks – Towards Data Science by Paul-Louis Pröve, October 17, 2017

- [13] Lessons From AlphaZero (part 4): Improving the Training Target by Vish Abrams, Oracle Blog, June 27, 2018

- [14] GitHub - LeelaChessZero/lczero-training: For code etc relating to the network training process.

- [15] My failed attempt to change TCEC NN clone rules by Alexander Lyashuk, CCC, September 14, 2019 » TCEC

- [16] My failed attempt to change TCEC NN clone rules by Alexander Lyashuk, CCC, September 14, 2019 » TCEC

- [17] Lc0 won TCEC 15 by crem, LCZero blog, May 28, 2019

- [18] TCEC S17 SUper FInal report by @glbchess64, LCZero blog, April 21, 2020

- [19] Book about Neural Networks for Chess by dkl, CCC, September 29, 2021

- [20] GeForce 20 series from Wikipedia

- [21] Multiply–accumulate operation - Wikipedia

- [22] GeForce RTX 2070 Graphics Card | NVIDIA

- [23] GitHub - kiudee/chess-tuning-tools by Karlson Pfannschmidt

- [24] Fat Fritz 1.1 update and a small gift by Albert Silver. ChessBase News, March 05, 2020

- [25] Welcome to Chess Tuning Tools’s documentation!